Modern CPU Architecture

Why do we need a GPU for training and inference? Are there other ways?

At the time of writing this article, I already have some experience with many ML tasks. But if I do not have a GPU, does that mean I cannot do any ML tasks?

Note: All the images are from the articles listed at the end of this article.

How do LLMs make decisions?

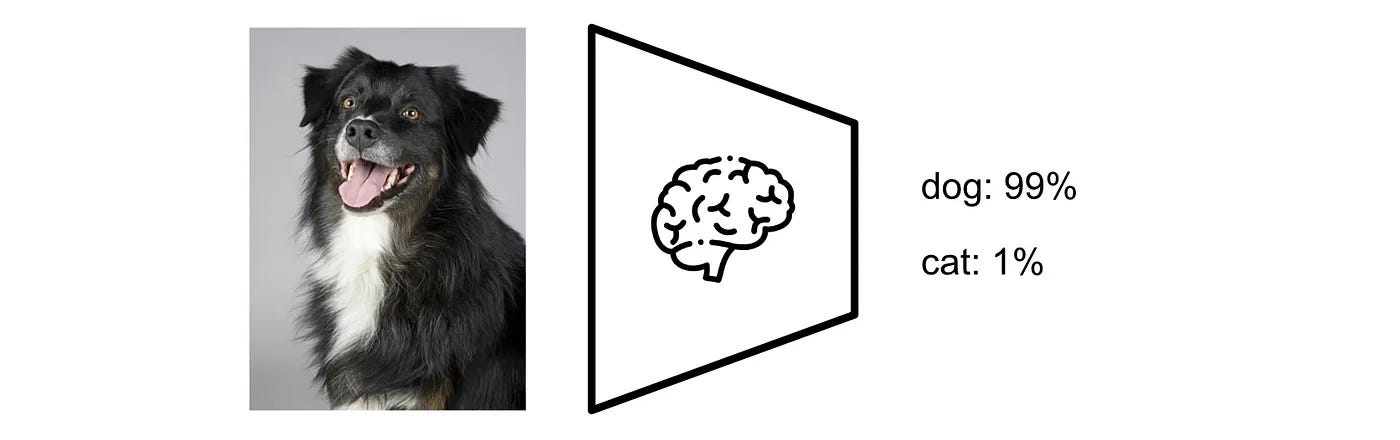

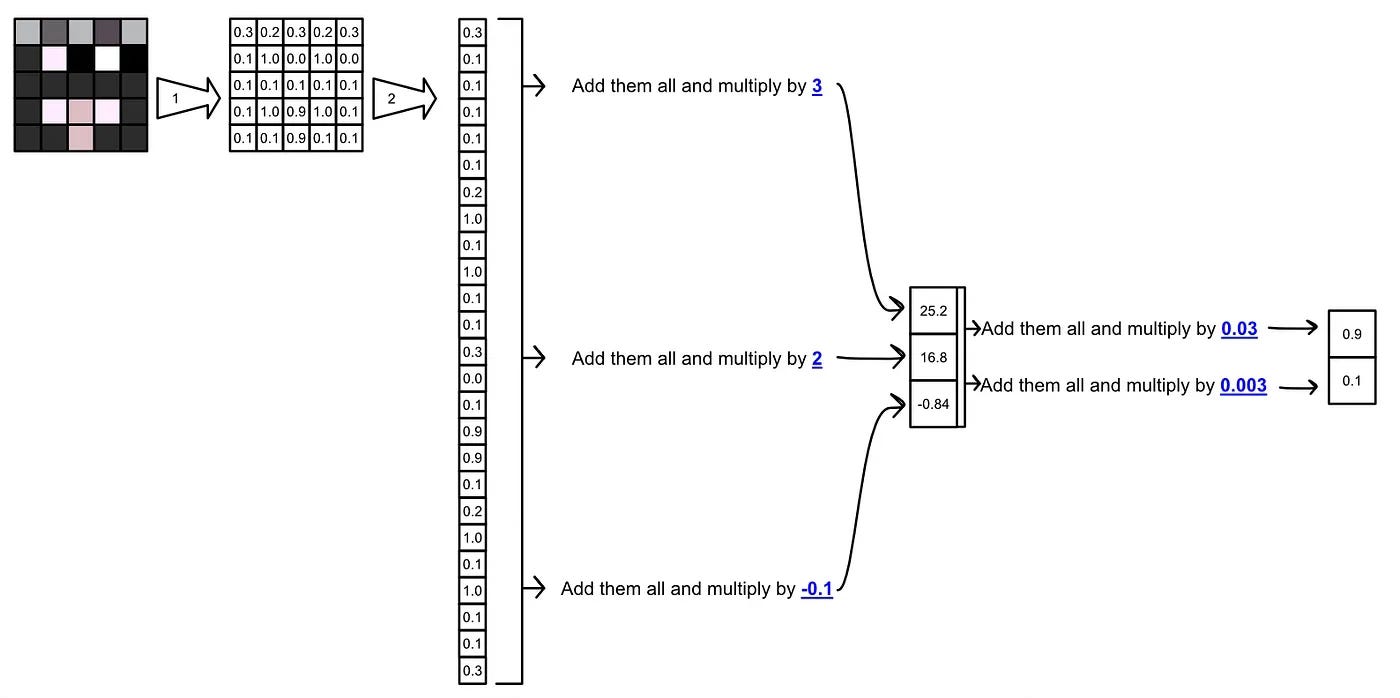

For example, suppose we want to do an image classification task like this:

The process behind this task is a mathematical operation, like:

We can say that LLMs do a huge number of simple mathematical computations at once.

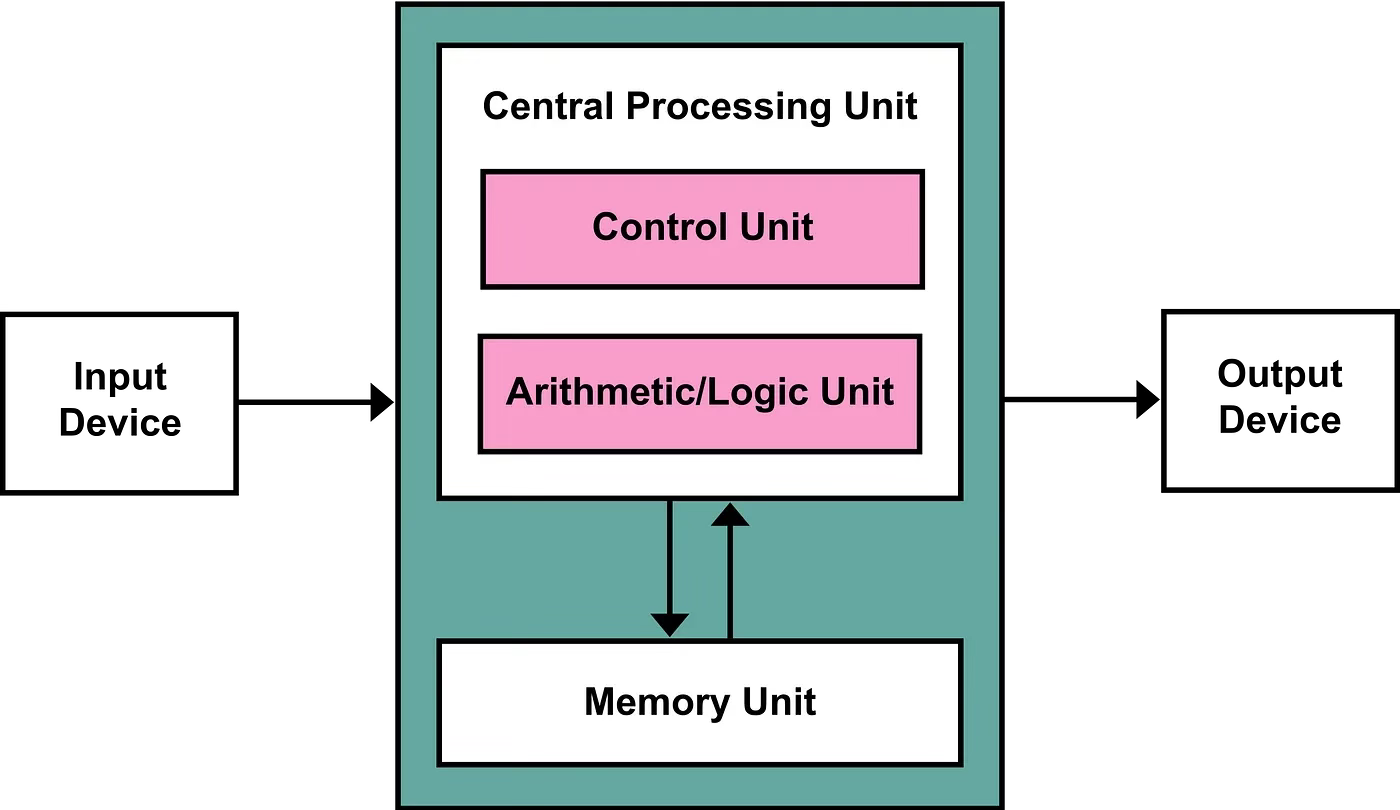
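To make "a ton of simple math at once" concrete, here is a minimal sketch of one tiny neural network layer written as plain multiplications and additions. The inputs, weights, and the `dense_layer` name are made up for illustration and do not come from any real model.

```python
# Hypothetical toy layer: 3 inputs, 2 outputs. All numbers are made up.
inputs = [1.0, 2.0, 3.0]
weights = [
    [0.5, -1.0, 2.0],   # weights feeding output 0
    [1.5, 0.0, -0.5],   # weights feeding output 1
]


def dense_layer(inputs, weights):
    """Compute each output as a sum of input * weight products."""
    outputs = []
    for row in weights:
        total = 0.0
        for x, w in zip(inputs, row):
            total += x * w  # one multiplication and one addition
        outputs.append(total)
    return outputs


outputs = dense_layer(inputs, weights)
print(outputs)
```

Each output only depends on the inputs and its own row of weights, so in principle all of the outputs could be computed at the same time. A real model repeats this pattern with millions or billions of weights.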

The CPU Architecture

Let’s first learn more about the modern CPU. The modern CPU is based on the “Von Neumann architecture”.

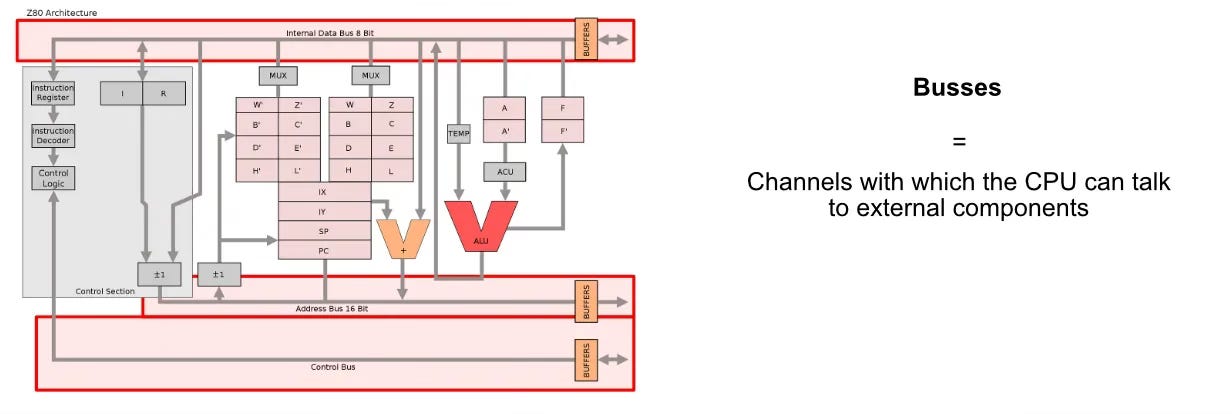

We will use the Z80 CPU as an example. Conceptually, modern CPUs aren’t that different from the Z80, so we can use it as a simplified example to begin understanding how CPUs work.

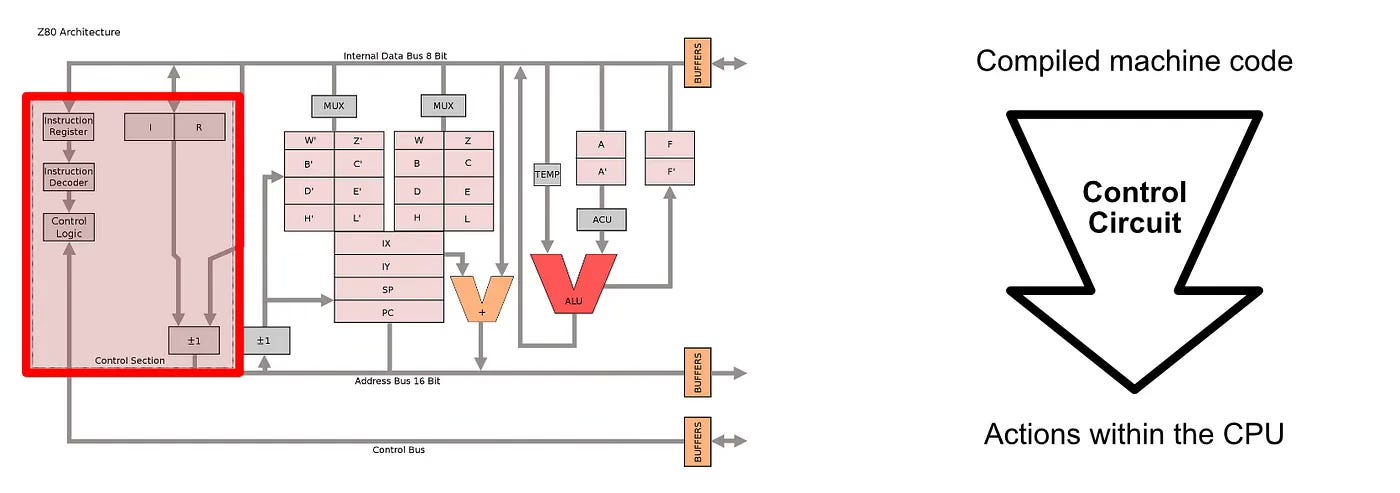

The Z80 featured a control circuit, which converted low-level instructions into actual actions within the chip and kept track of bookkeeping details, like which commands the CPU was supposed to run.

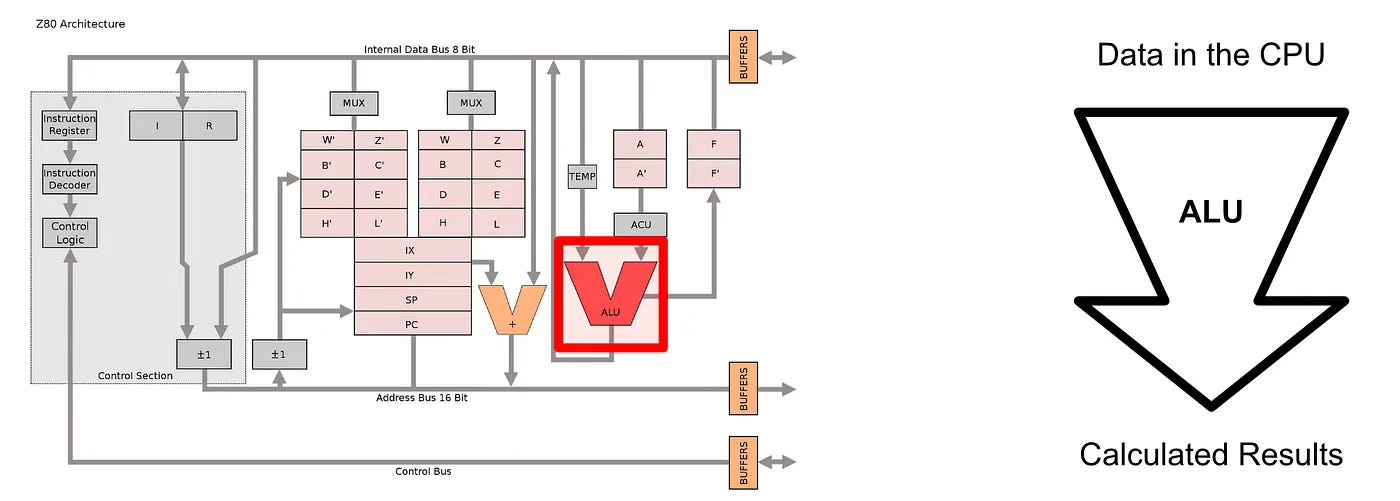

The Z80 featured an “arithmetic logic unit” (or ALU for short), which was capable of doing a variety of basic arithmetic and logic operations. This is the component that did most of the actual computing within the Z80 CPU. The Z80 would get a few pieces of data into the inputs of the ALU, then the ALU would add them, subtract them, or do some other operation based on the current instruction being run by the CPU.

Virtually any complex mathematical function can be divided into simple steps. The ALU is designed to do the most fundamental math, meaning a CPU can carry out complex math by using the ALU to do many simple operations. For example, the integral below (which is from calculus) can be approximated using just multiplication, division, and addition.

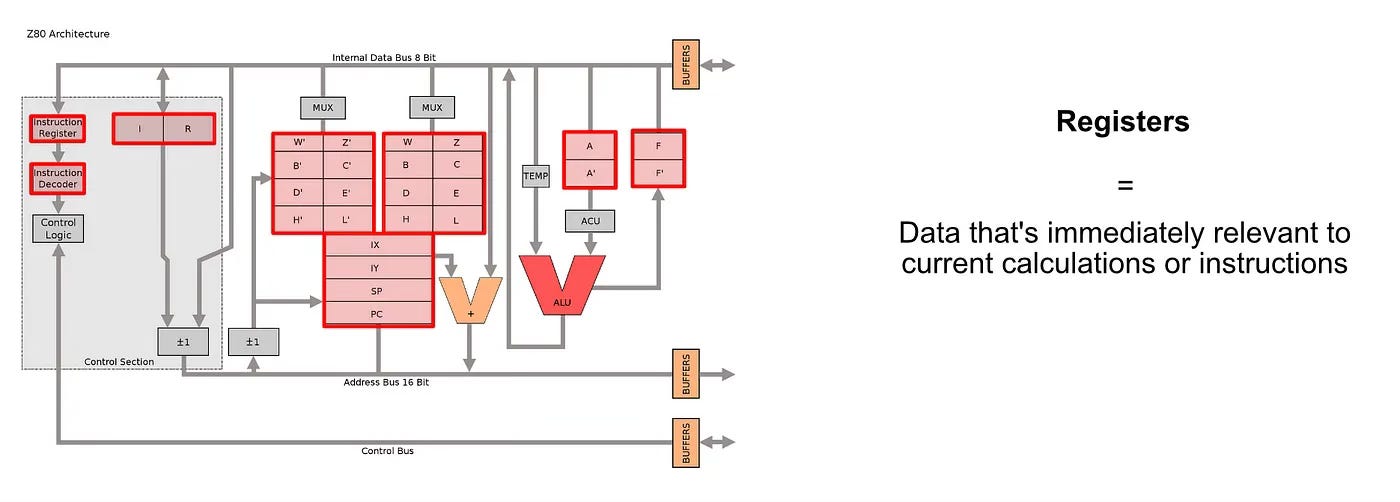
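As a rough illustration of breaking an integral into simple steps, the sketch below approximates an integral with a midpoint Riemann sum. The function name `integrate`, the choice of x squared, and the step count are assumptions made for this example, not something taken from the figure.

```python
def integrate(f, a, b, steps=1000):
    """Approximate the integral of f from a to b with a midpoint Riemann sum."""
    width = (b - a) / steps                 # one division
    total = 0.0
    for i in range(steps):
        midpoint = a + (i + 0.5) * width    # multiplication and addition
        total += f(midpoint) * width        # multiplication and addition
    return total


# Integral of x^2 from 0 to 3; the exact answer is 9.
area = integrate(lambda x: x * x, 0.0, 3.0)
print(area)
```

Nothing in the loop is more advanced than multiplying, dividing, and adding, which is the kind of work an ALU repeats many times to evaluate "complex" math.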

The Z80 also contained a bunch of registers. Registers are tiny, super fast pieces of memory that exist within the CPU to store certain key pieces of information like which instruction the CPU is currently running, numerical data, addresses to data outside the CPU, etc.

When one thinks of a computer it’s easy to focus on circuits doing math, but in reality a lot of design work needs to go into where data gets stored.

The question of how data gets stored and moved around is a central topic in this article, and plays a big part as to why modern computing relies on so many different specialised hardware components.

The CPU needs to talk with other components in the computer, which is the job of the busses. The Z80 CPU had three busses:

The Address Bus communicated data locations the Z80 was interested in

The Control Bus communicated what the CPU wanted to do

The Data Bus communicated actual data coming to and from the CPU

So, for instance, if the Z80 wanted to read some data from RAM and put that information into a local register for calculation, it would use the address bus to communicate which data it was interested in, then it would use the control bus to communicate that it wanted to read data, then it would receive that data over the data bus.

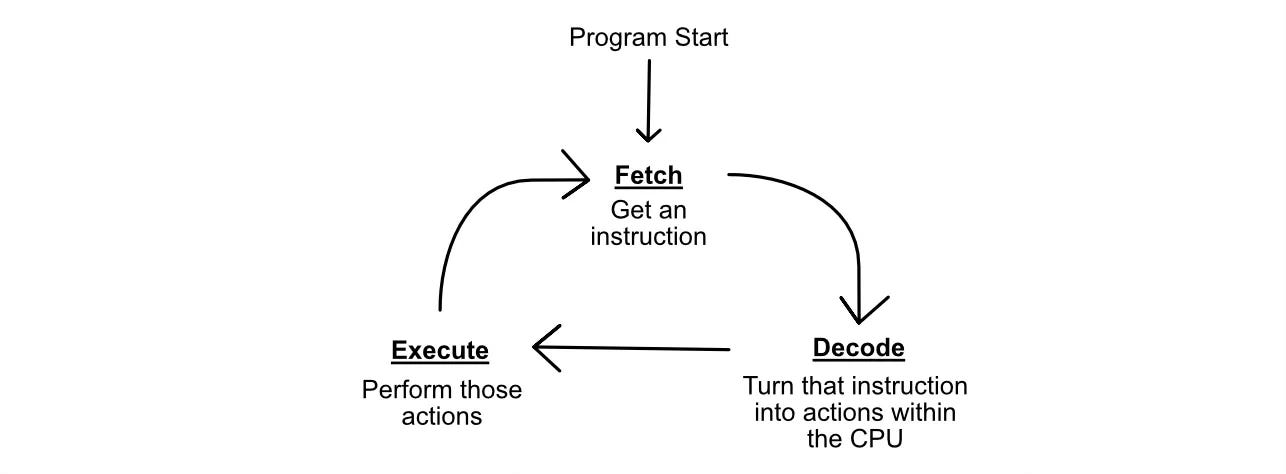

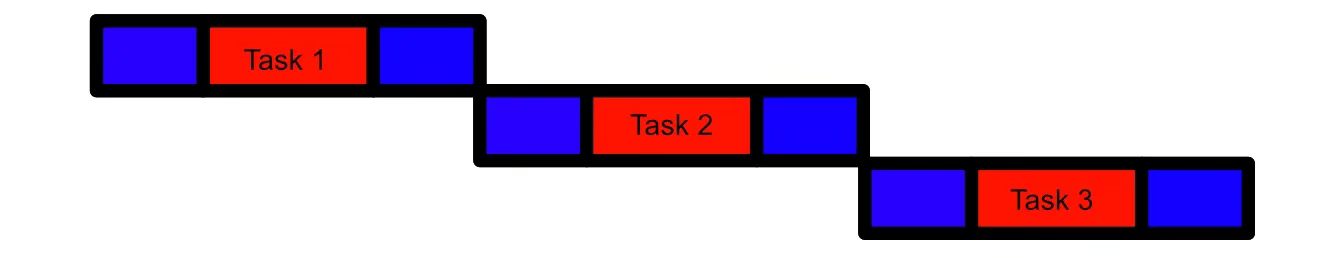
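The read sequence described above can be sketched as a toy simulation. This is only a conceptual model: the `Busses` class, the `RAM` contents, and the `"READ"`/`"WRITE"` strings are hypothetical stand-ins, not how the Z80's pins and signals actually work.

```python
# Hypothetical RAM contents: address -> byte value.
RAM = {0x1000: 42, 0x1001: 7}


class Busses:
    """The three busses, modelled as three plain values."""

    def __init__(self):
        self.address = None   # which location the CPU is interested in
        self.control = None   # what the CPU wants to do ("READ" or "WRITE")
        self.data = None      # the value travelling to or from the CPU


def memory_respond(busses):
    """RAM looks at the address and control busses and reacts."""
    if busses.control == "READ":
        busses.data = RAM[busses.address]
    elif busses.control == "WRITE":
        RAM[busses.address] = busses.data


def cpu_read(busses, address):
    busses.address = address   # 1. put the location on the address bus
    busses.control = "READ"    # 2. signal a read on the control bus
    memory_respond(busses)     # 3. RAM places the value on the data bus
    return busses.data         # 4. the CPU copies it into a register


busses = Busses()
register_a = cpu_read(busses, 0x1000)
print(register_a)
```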

The whole point of this song and dance is to allow the CPU to perform the “Fetch, Decode, Execute” cycle. The CPU “fetches” some instruction, then it “decodes” that instruction into actual actions for specific components in the CPU to undertake, then the CPU “executes” those actions. The CPU then fetches a new instruction, restarting the cycle.

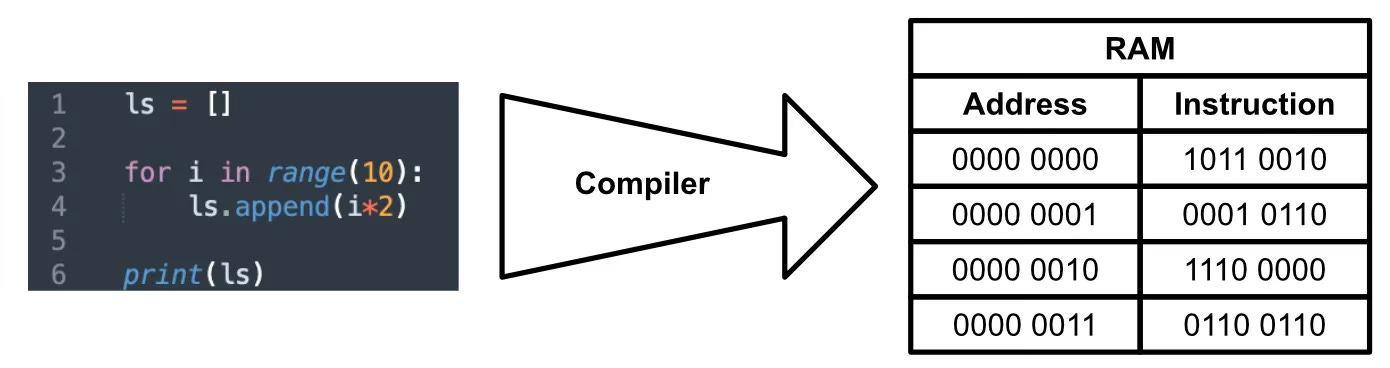
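Here is a minimal sketch of the fetch, decode, execute loop. The instruction set (`LOAD`, `ADD`, `HALT`) is invented for this example and is not real Z80 machine code; a real CPU works on binary opcodes rather than Python tuples.

```python
# A made-up instruction set, not real Z80 machine code.
program = [
    ("LOAD", 5),     # put 5 into the accumulator
    ("ADD", 3),      # add 3 to the accumulator
    ("ADD", 10),     # add 10 to the accumulator
    ("HALT", None),  # stop and report the accumulator
]


def run(program):
    accumulator = 0
    program_counter = 0
    while True:
        instruction = program[program_counter]   # fetch
        program_counter += 1
        opcode, operand = instruction            # decode
        if opcode == "LOAD":                     # execute
            accumulator = operand
        elif opcode == "ADD":
            accumulator += operand
        elif opcode == "HALT":
            return accumulator
        else:
            raise ValueError(f"unknown opcode: {opcode}")


result = run(program)
print(result)
```

The `program_counter` and `accumulator` variables play the role of registers: small pieces of state the CPU keeps close at hand while it steps through the program.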

This cycle works in concert with a program. The program is translated by a compiler into machine code, and that machine code is what gets sent to the CPU. By that point, the program looks very different from the source code a human wrote.

Once the code has been compiled, the CPU simply fetches an instruction, decodes it into actions within the CPU, then executes those actions.

Design Constraints of the CPU

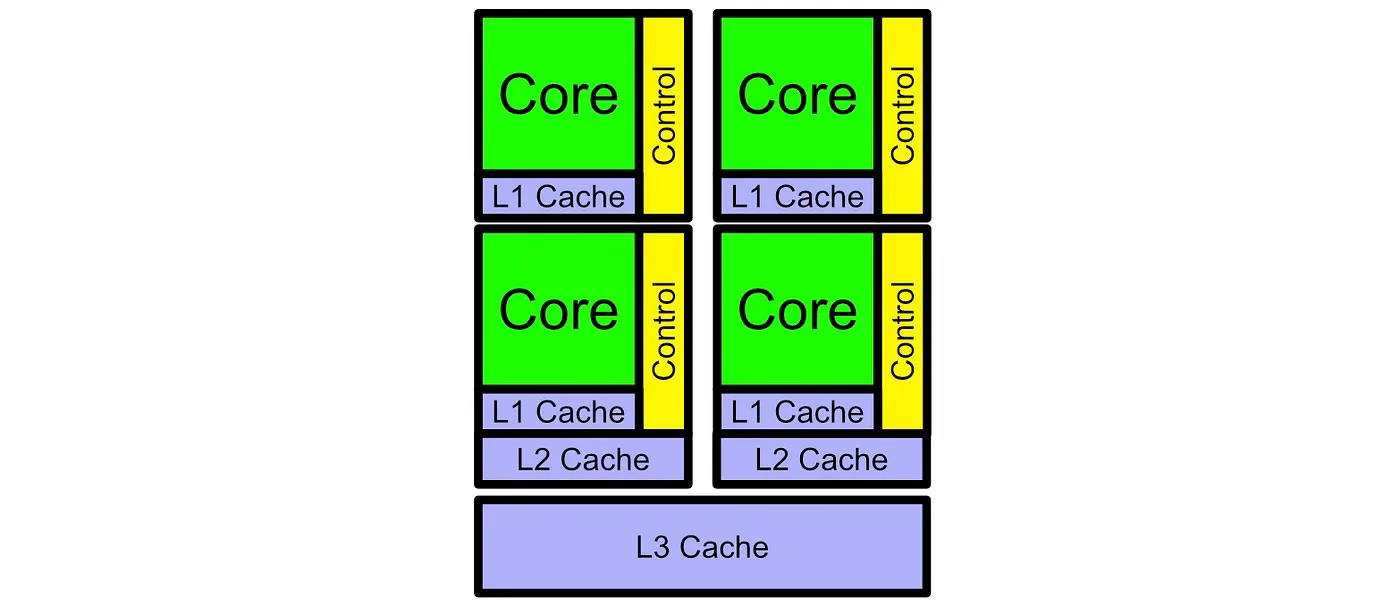

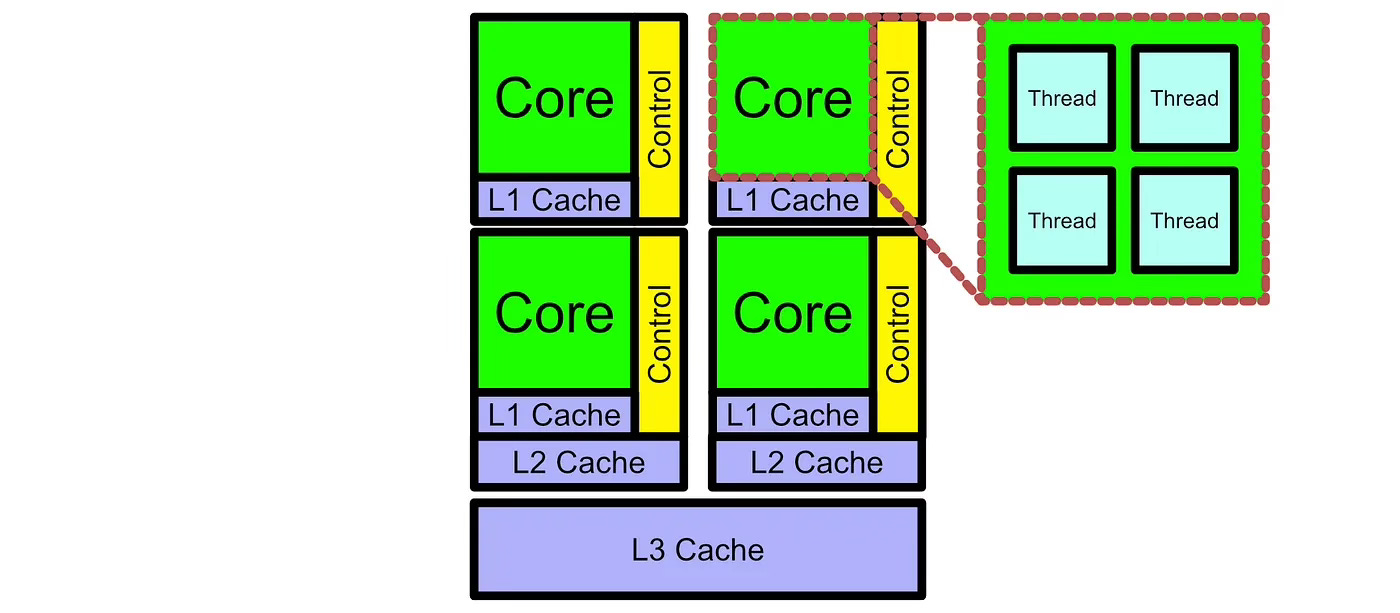

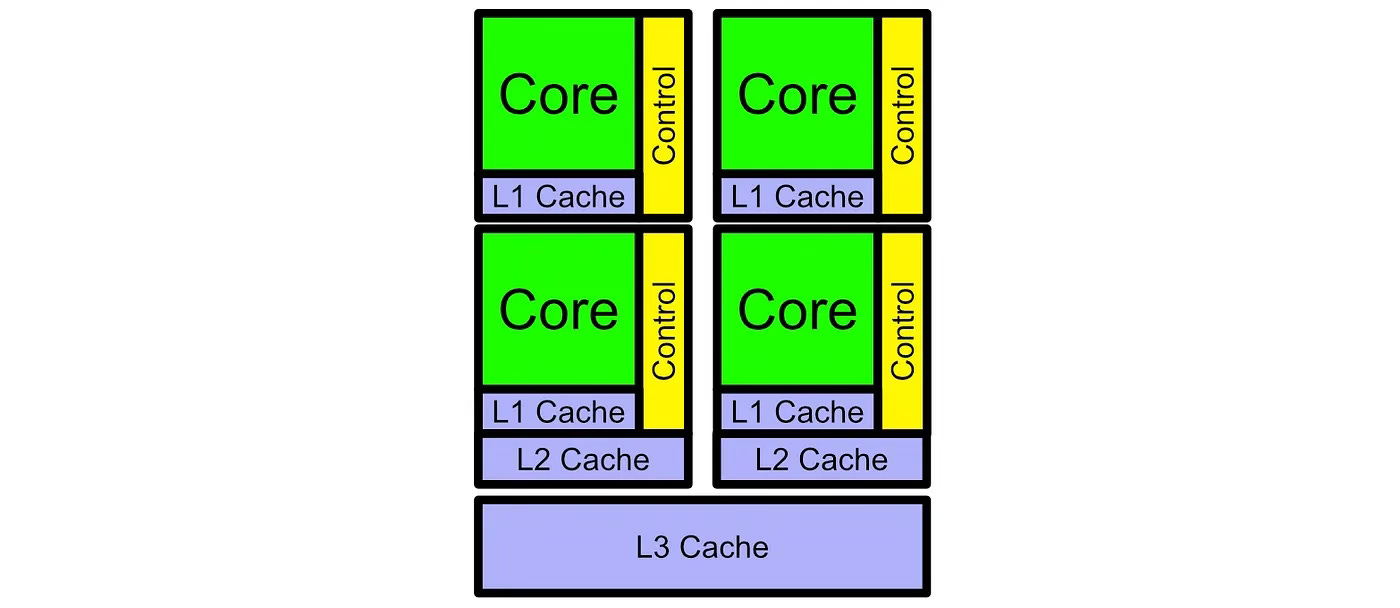

The Z80 had a single “core”. A modern CPU has multiple cores, like below:

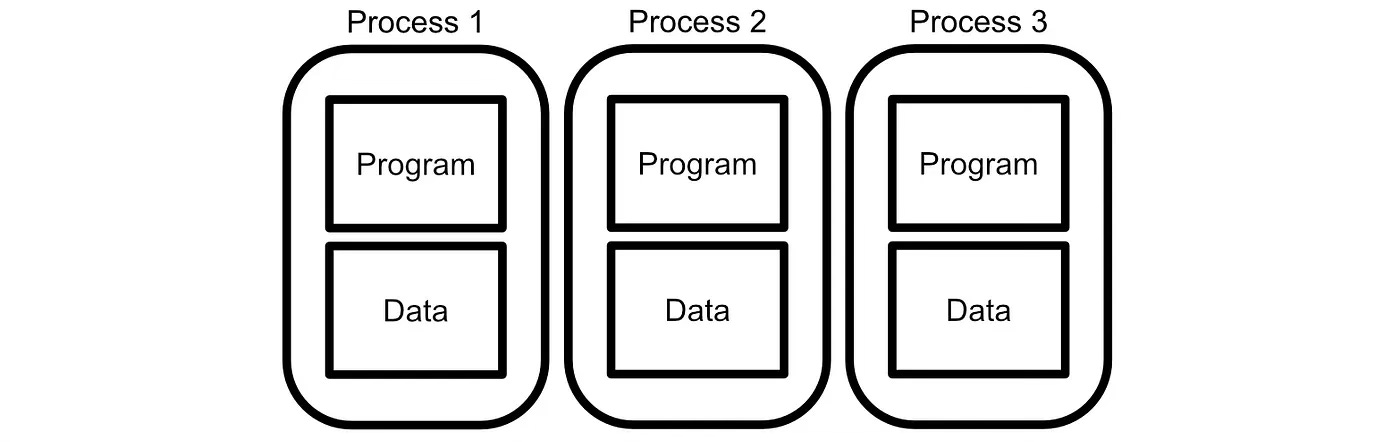

Multiple Processes

It is possible for the cores in a CPU to talk with each other, but to a large extent they are responsible for different things. Each of these “different things” is called a “process”. A process, in formal computing terms, is a running program together with its own memory, isolated from other processes. You can have multiple processes on different cores, and they generally won’t talk to one another.

Multiple Threads

Sometimes it’s useful for a computer to break up the calculations within a single program and run them in parallel. That’s why there are usually multiple “threads” within each core of a CPU. Threads can share the same address space in memory and work on it cooperatively.
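The idea of threads working on one shared address space can be sketched with software threads. Note that this uses Python's `threading` module as an analogy, not the hardware threads inside a core, and in standard CPython builds the global interpreter lock makes these threads take turns rather than truly run in parallel. The `worker` function and the way the work is chunked are assumptions for this example.

```python
import threading

results = [0, 0, 0, 0]   # shared memory: every thread sees the same list


def worker(index, numbers):
    # Each thread writes only to its own slot, so no lock is needed here.
    results[index] = sum(numbers)


# Split the numbers 0..99 into four chunks, one per thread.
chunks = [range(0, 25), range(25, 50), range(50, 75), range(75, 100)]
threads = [
    threading.Thread(target=worker, args=(i, chunk))
    for i, chunk in enumerate(chunks)
]
for t in threads:
    t.start()
for t in threads:
    t.join()   # wait for every thread to finish

total = sum(results)
print(total)
```

Because all four threads live in the same process, they can all write into `results` directly. Separate processes would each get their own copy instead.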

So, a CPU can have multiple cores to run separate processes, and each of those cores has threads to allow for some level of parallel execution.

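Why can’t we make one giant CPU?

To synchronise the rapid executions in a CPU, your computer has a quartz clock. A quartz clock ticks at steady intervals, allowing your computer to keep operations in orderly lockstep. However, modern CPU clocks reach into the gigahertz range, so a single tick lasts a nanosecond or less. Even light covers at most about 30 cm in a nanosecond, and electrical signals in a chip are slower, so a chip cannot grow arbitrarily large and still keep every part in step.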
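To get a feel for how short a clock tick is at gigahertz speeds, here is a small back-of-the-envelope calculation. The 3 GHz clock is an assumed example value, and the speed of light in a vacuum is used as an upper bound; real signals in a chip travel slower.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458   # in a vacuum; signals in a chip are slower
clock_hz = 3e9                          # assumed 3 GHz clock

tick_seconds = 1 / clock_hz                                 # duration of one tick
distance_cm = SPEED_OF_LIGHT_M_PER_S * tick_seconds * 100   # metres -> centimetres

print(f"One tick lasts {tick_seconds * 1e9:.2f} ns")
print(f"Light travels about {distance_cm:.1f} cm in that time")
```

So within one tick, nothing can travel further than roughly the width of a hand, which puts a physical limit on how far apart the synchronised parts of a chip can be.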

How do CPUs keep latency low?

When we are running a program sequentially, the latency of each operation has a big impact on performance.

So, CPUs attempt to minimise latency as much as possible. For example, CPUs have a special type of memory called cache, which is designed to store important data close to the CPU so that it can be retrieved quickly.
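The benefit of a cache can be sketched with a toy model that counts how often a read is served from the cache (a hit) versus main memory (a miss). The `TinyCache` class, its capacity, and the least-recently-used eviction rule are assumptions for illustration; real CPU caches are built in hardware and use their own replacement policies.

```python
from collections import OrderedDict


class TinyCache:
    """A small least-recently-used cache in front of slow memory."""

    def __init__(self, memory, capacity):
        self.memory = memory
        self.capacity = capacity
        self.lines = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, address):
        if address in self.lines:
            self.hits += 1
            self.lines.move_to_end(address)   # mark as recently used
            return self.lines[address]
        self.misses += 1                      # slow path: go to main memory
        value = self.memory[address]
        self.lines[address] = value
        if len(self.lines) > self.capacity:
            self.lines.popitem(last=False)    # evict the least recently used
        return value


# Hypothetical main memory: 100 addresses with made-up values.
memory = {address: address * 2 for address in range(100)}
cache = TinyCache(memory, capacity=4)
for address in [1, 2, 3, 1, 2, 3, 1, 2, 3]:
    cache.read(address)

print(cache.hits, cache.misses)
```

Only the first read of each address has to go to main memory. When a program keeps returning to the same data, most reads take the fast path, which is where the latency savings come from.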

In conclusion, CPUs are great for running sequential programs, and even have some ability to parallelise computation.

Acknowledgements

https://towardsdatascience.com/groq-intuitively-and-exhaustively-explained-01e3fcd727ab

https://en.wikipedia.org/wiki/Zilog_Z80